Published by Martin Joo on December 29, 2022

Smooth Deployments with Laravel and DigitalOcean

Introduction

A few months ago I launched my first "indie" project, and I wanted the whole infrastructure and deployment process to be as smooth as possible. Here are my top priorities:

- Push-to-deploy. I push to the main branch and production is updated.

- Zero-downtime deployments.

- 1-click rollbacks. It's pretty important to me that if something goes wrong I can roll back with just one click.

- Dev/prod parity. Staging and production should be as similar as possible.

- Minimal server maintenance. Usually, I like playing around with servers and docker commands, but in this case I wanted to ship a project, a good product. I wanted to be fast and responsive, so I'd rather focus on the things that actually matter.

- Learn something new. I wanted to build the product's first version in six weeks, and one week was allocated to some "developer porn." So I wanted to learn at least one new infrastructure-related thing.

- Deploy at least once a day. It's not related to architecture, but it was an important rule for me. I wanted to deploy each and every day I worked on the project.

- Easy to scale. To be honest, it wasn't my number 1 priority, because it doesn't really matter at the beginning of a small project, but I wanted an infrastructure that can be scaled easily (in a few clicks) if needed.

The Final Architecture

So the project is kind of unique since it's not a high-level business application with a nice UI or something like that. It's a code review or code analysis tool. This is how it works in a nutshell:

- Users register on laracheck.io

- They install the Laracheck app on their GitHub account

- They open a new pull request

- Laracheck will review the code, run its checks, and comment on the PR with the results

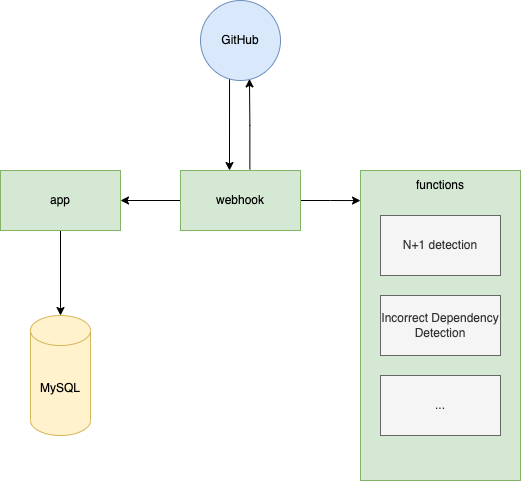

The project has three main components:

- app. This is what you see on laracheck.io. It's a PHP/Laravel application.

- webhook. Any time you open a PR in a repo where Laracheck is installed, GitHub sends a `POST` request to this component. It's a nodejs application. It gathers some information using the GitHub API and communicates with the app component; for example, it checks whether you have a subscription or you're on a free trial. Finally, it starts the code checks.

- functions. This is where the actual code analysis happens. Each code check is a serverless function, usually written in nodejs and in some cases in PHP. Yep, I'm really building a Laravel-specific code review tool using nodejs 🤷♂️
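To make the functions component more concrete, here is a minimal sketch of what a single check could look like as a nodejs serverless function. It assumes an OpenWhisk-style entry point (an exported `main(args)` that returns `{ body }`) and a hypothetical payload of changed files; the check itself, flagging `env()` calls outside `config/`, is only an illustrative example and not necessarily one of Laracheck's real checks.

```javascript
// Hypothetical code check packaged as a serverless function.
// Assumption: the runtime calls an exported `main(args)` and uses the
// returned object's `body` as the response (OpenWhisk-style convention).
// Assumption: the webhook sends { files: [{ filename, content }] }.

// Why this check: once Laravel's config is cached, the .env file is no
// longer loaded, so env() calls outside config files can return null.
function findEnvCalls(files) {
  const issues = [];
  for (const { filename, content } of files) {
    if (filename.startsWith("config/")) continue; // env() belongs here
    // Crude line-based text match, not real PHP parsing
    content.split("\n").forEach((line, index) => {
      if (/\benv\s*\(/.test(line)) {
        issues.push({
          filename,
          line: index + 1,
          message: "env() called outside config/",
        });
      }
    });
  }
  return issues;
}

function main(args) {
  const files = Array.isArray(args.files) ? args.files : [];
  return { body: { check: "env-outside-config", issues: findEnvCalls(files) } };
}

exports.main = main;
```

Because each check is just a function from "changed files" to "issues", it can be deployed, scaled, and rewritten (for example in PHP) independently of the others.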

This is what it looks like:

When a `POST` request comes in from GitHub, the webhook component calls 15+ functions, gathers the results, and posts a comment to the PR.
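The fan-out step could look roughly like this. It's a sketch under assumptions: `invoke(checkName, payload)` is a hypothetical helper that calls one serverless function and resolves to `{ issues }`, and the check names and comment format are made up. `Promise.allSettled` is used so that one failing function doesn't prevent the comment from being posted for the other checks.

```javascript
// Run every check concurrently and collect one result per check.
// `invoke` is a hypothetical helper injected by the caller (e.g. an HTTP
// call to the function's URL); it resolves to { issues: [...] }.
async function runChecks(checkNames, payload, invoke) {
  // allSettled: a failing or timed-out function must not sink the review
  const settled = await Promise.allSettled(
    checkNames.map((name) => invoke(name, payload))
  );
  return settled.map((result, i) =>
    result.status === "fulfilled"
      ? { check: checkNames[i], issues: result.value.issues }
      : { check: checkNames[i], error: String(result.reason) }
  );
}

// Render the collected results as a single markdown PR comment body.
function renderComment(results) {
  const lines = ["Code review results"];
  for (const r of results) {
    if (r.error) lines.push(`- ${r.check}: failed to run`);
    else if (r.issues.length === 0) lines.push(`- ${r.check}: OK`);
    else lines.push(`- ${r.check}: ${r.issues.length} issue(s)`);
  }
  return lines.join("\n");
}
```

Since the functions run in parallel, the total latency is roughly that of the slowest check rather than the sum of all 15+.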

So the whole project is an app on DigitalOcean App Platform. This instantly satisfies a number of my priorities:

- Push-to-deploy. Done. Each of the main components is linked to the corresponding GitHub repo's main branch, so any time I push to main, it gets deployed. I don't even manage docker images myself: App Platform gets the code from GitHub and builds the images using standard buildpacks.

- Zero-downtime deployments. Done. When I push a commit, the new docker images are built while the old version is still available. When the build is done, the new version takes over.

- 1-click rollbacks. Done. Any time a deployment fails, it rolls back to the previous version. If the deployment is successful but the code contains some critical bug, I can roll back manually in two steps: I run `php artisan migrate:rollback`, then I click a button. The migration part is not that great; however, it's a one-person project, so it works for me.

- Dev/prod parity. Done. I have two apps on App Platform: one for production (using the main branches of the repos) and one for staging (using the develop branches). When I'm working locally, I push to develop so I can check everything on staging. When I'm done, I rebase the main branch onto develop and push it so production gets deployed. Of course, it means extra cost, but a minimal setup costs only ~$15 a month, so it's pretty cheap.

- Minimal server maintenance. Done. In fact, the whole project is serverless. I don't even have SSH keys, and I like it. Of course, each component has a console in the DigitalOcean UI, but I rarely use them. The only thing I need is logs, but I use an external error tracking system, so I don't have to look at endless log files.

- Learn something new. Done. I've never used serverless functions before.

- Easy to scale. Done. I can scale the `app` and `webhook` components horizontally or vertically by clicking a button. Serverless functions are "infinite-scale" by default, so I don't need to worry about them. And this is a big win for me. I had never built a code analysis tool before, and I wasn't sure how resource-intensive these tasks would be. A lot of them are pretty straightforward, but N+1 detection requires some weird things, and I wasn't sure about performance. With functions, I don't really need to worry about it.
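The zero-downtime and rollback behaviour described in the list above boils down to a simple rule: keep serving the old version until the new one is built and healthy, and remember previous versions so you can switch back. The sketch below is a conceptual model only, not how App Platform is implemented; `build` and `isHealthy` are hypothetical stand-ins for the platform's build and health-check steps.

```javascript
// Conceptual model of push-to-deploy with zero downtime and rollbacks.
// `build(commit)` and `isHealthy(image)` are hypothetical callbacks.
class Deployer {
  constructor(initialVersion) {
    this.history = [initialVersion]; // last element is the live version
  }

  get live() {
    return this.history[this.history.length - 1];
  }

  async deploy(commit, build, isHealthy) {
    let candidate;
    try {
      candidate = await build(commit); // old version keeps serving meanwhile
    } catch {
      return this.live; // failed build: the previous version stays live
    }
    if (!(await isHealthy(candidate))) return this.live; // failed health check
    this.history.push(candidate); // switching over is a single step
    return this.live;
  }

  // The "one click": go back to the previous version. This covers code
  // only; migrations still need a separate `php artisan migrate:rollback`.
  rollback() {
    if (this.history.length > 1) this.history.pop();
    return this.live;
  }
}
```

Note that the model makes the weak spot visible: rolling back code is trivial, but the database state is not part of `history`.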

Other Options

Now that we've seen the architecture I went with, let's talk about the alternatives.

Without Functions

The initial architecture didn't contain serverless functions at all. Every code check lived in the webhook component itself, and I didn't like it for the following reasons:

- Performance. It's a nodejs app, so its JavaScript code runs on a single thread. 90% of the checks are synchronous, blocking, CPU-intensive operations. Some of them are pretty lightweight, but others are CPU-heavy, and while one of those runs, the webhook can't handle anything else. This was my biggest fear. Of course, I could spin up a few instances of the webhook, but that's more costly, and I'd pay for those instances even when they aren't being used to their full extent.

- Language-dependent. Usually, this is not a problem because I build PHP stuff. However, in this project, I wanted to use nodejs for most of the checks, because I prefer JavaScript/nodejs when it comes to low-level stuff. These code checks don't perform database queries, there's no ORM, they don't send notifications, and there are no queue jobs. They are much lower-level, meaning that I perform AST parsing, string and array operations, recursion, while loops, and stuff like that. In my opinion, the standard lib of PHP is a nightmare compared to JavaScript/nodejs or, let's say, Go. I also considered Go, but I decided to try only one new thing in this project, which was serverless functions, so Go had to go. So I wanted to use node, but I knew I was gonna need PHP as well. The reason is that there are some amazing open-source code analysis tools in PHP, such as phpmd, phpstan, and a bunch of others, and I knew I'd want to use them at some point in the future (to build more complicated checks, such as cyclomatic complexity, on top of them).
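To give a feel for the "low-level" work mentioned above, here is a toy recursive tree walk that flags method calls made inside loop bodies, a very crude N+1-style heuristic. The node shape `{ kind, name, children }` is a hypothetical simplification; a real check would work on the AST produced by an actual parser, and this is not Laracheck's N+1 detection.

```javascript
// Recursively walk a simplified syntax tree and collect the names of
// method calls that appear anywhere inside a loop body.
// Node shape (hypothetical): { kind: string, name?: string, children?: Node[] }
function findCallsInLoops(node, insideLoop = false, found = []) {
  // Once we enter a loop, every descendant counts as "inside a loop"
  const inLoop = insideLoop || node.kind === "Foreach" || node.kind === "For";
  if (inLoop && node.kind === "MethodCall") found.push(node.name);
  for (const child of node.children || []) {
    findCallsInLoops(child, inLoop, found);
  }
  return found;
}
```

For example, a `$users->all()` call before a `foreach`, followed by `$user->posts()` inside it, would report only `posts`. That is the kind of recursion and array juggling that feels more natural in JavaScript than in PHP's standard library.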

So, in a nutshell, this is why I went with functions. The whole refactor took about two days, so it was easy.

docker-compose

Another simple approach would be to run the project in a docker-compose stack on a single VPS. In this case, I would have a GitHub Actions workflow that builds a new image, pushes it to Docker Hub, and restarts the stack with the new image. It's not that complicated, but I had some concerns:

- I didn't want to use docker at all. I have a love/hate relationship with it, but here's my default rule: use it in a team, and don't use it in a solo project. The whole project took six weeks from idea to launch, and being able to launch it as quickly as possible was the most important thing. I'm 100% sure that if I had used docker, it would have added 10-20% to the development time in the beginning. Just some of the complexity:

- Copying and modifying at least three dockerfiles

- Provisioning a server

- Writing the pipeline

- I need to make sure that containers are up and running all the time

- But the worst part is this: if something goes wrong with docker I'm in deep shite. These debugging sessions usually take hours and are complicated.

Let's see if this would have satisfied my priorities:

- Push-to-deploy. Yes. I'd need to write it myself, but it's not that complicated.

- Zero-downtime deployments. No. At least not out-of-the-box. There are different solutions to this problem, but they require manual work and extra packages.

- 1-click rollbacks. No. At least not out-of-the-box.

- Dev/prod parity. 100% yes. In fact, it's even better than the current infrastructure I'm using.

- Minimal server maintenance. Nope.

- Learn something new. Nope.

- Easy to scale. Kind of. But once again, it requires extra work.

It has another drawback compared to App Platform: I'd need to build the docker images (at least three) on my own runner (or the one GitHub provides for free), and it would be way slower than App Platform.

So my main problem is that I'd need to deal with docker, a pipeline, and some package or another service that does zero-downtime deployments; I'd need to figure out rollbacks; and I'd have servers and SSH keys to take care of. Docker and docker-compose are definitely a good option, but for these reasons, I went with a fully managed serverless PaaS solution.

Other

- We still have the "old school" option: setting up an nginx server, cloning the repos onto the server itself, and serving the code directly. Basically, I'd have to do the same things I would have done in a Dockerfile. To be honest, this is the fastest option of all, and since I'm not in a team, it shouldn't be that bad, in my opinion. The biggest drawback is that it's even more manual than spinning up a docker-compose stack and probably requires a good chunk of shell scripts.

- Laravel Forge. This is a pretty good option that replaces the shell scripts.

- Laravel Vapor. It's totally serverless, so it seems like a good option. My only problem is that I don't have experience with it, and I'd rather use DigitalOcean over AWS. I definitely want to learn it in the future.

- Using k8s directly. It's out of the question for me.

So far I've been pretty happy with DigitalOcean and its managed services. As you can see, I was able to get everything that was important to me, and the UI is pretty nice, so I like using it. In my opinion, when you want to ship a side project and get users as fast as possible to find product-market fit, it's crucial to have your deployment process as smooth as possible. I didn't spend significant time on my infrastructure (apart from the initial setup, of course). I push, and the new version is out. That's it.

By the way, if you want to try out DigitalOcean serverless functions or App Platform, sign up using this link and you'll get $200 in credit: